This week’s releases share a quiet assumption: that AI has already won the argument. Nobody is debating whether to build with it anymore. The question everyone is answering now is where it belongs. Inside the operating system of the internet, if you’re WordPress. Inside the tool a billion people already open for directions, if you’re Google. Inside the creative environment where code gets written and shipped, if you’re Replit. Inside the agent stack that enterprises will eventually have to govern, if you’re Nvidia. The integration race is underway, and the teams that move fastest aren’t necessarily the ones with the best models.

TL;DR

- Nvidia announced NemoClaw, an enterprise-hardened platform built on OpenClaw, positioning itself as the governance layer for the agentic AI stack.

- Picsart launched an agent marketplace that lets creators delegate tasks to specialized AI assistants, with Shopify integration and WhatsApp/Telegram access.

- Replit released Agent 4, shifting focus from autonomous execution to creative flow, with parallel task execution and a unified design-and-build environment.

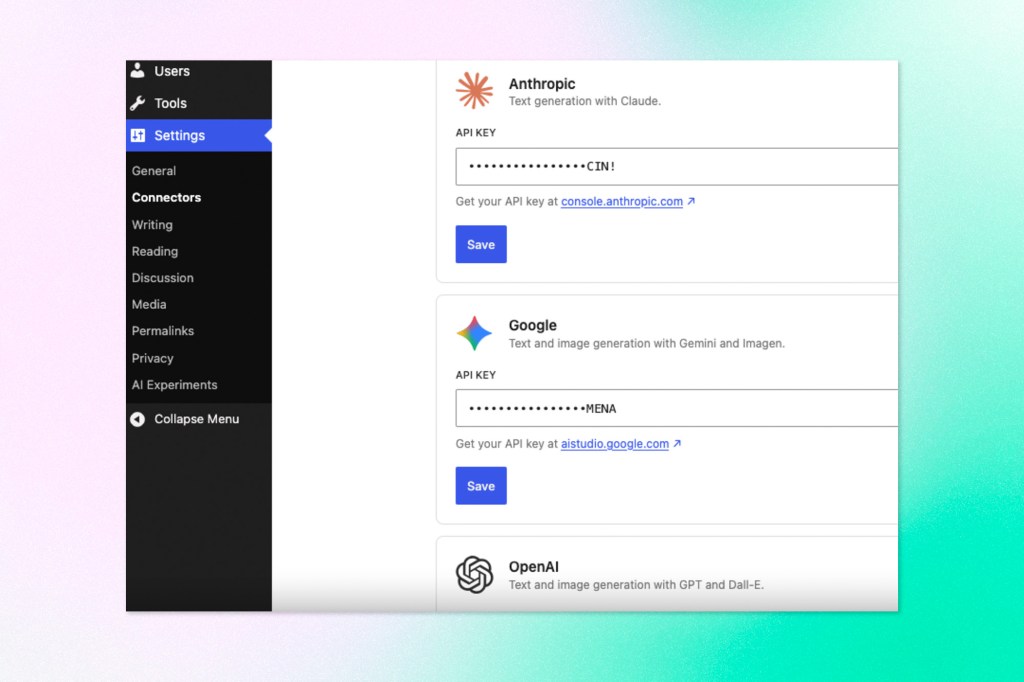

- Version 0.5.0 of the WordPress AI Experiments plugin aligns it with the upcoming WordPress 7.0 release, which lands native AI infrastructure directly in core on April 9th.

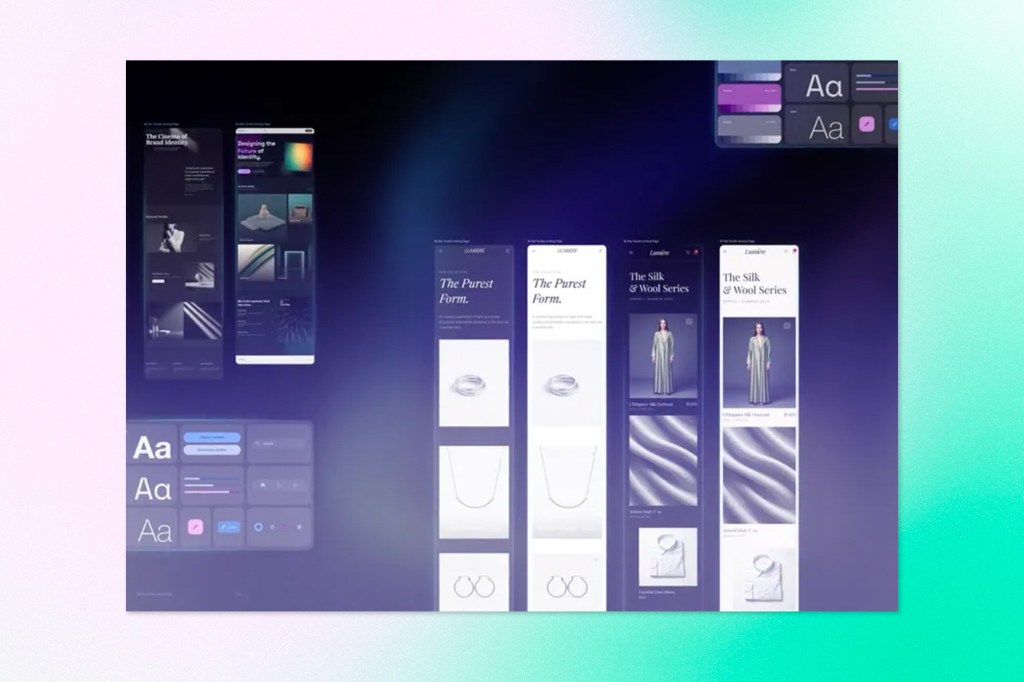

- Google Stitch launched a full redesign as an AI-native design canvas, introducing voice interaction, parallel agent management, a portable DESIGN.md design system format, and MCP-based developer handoff.

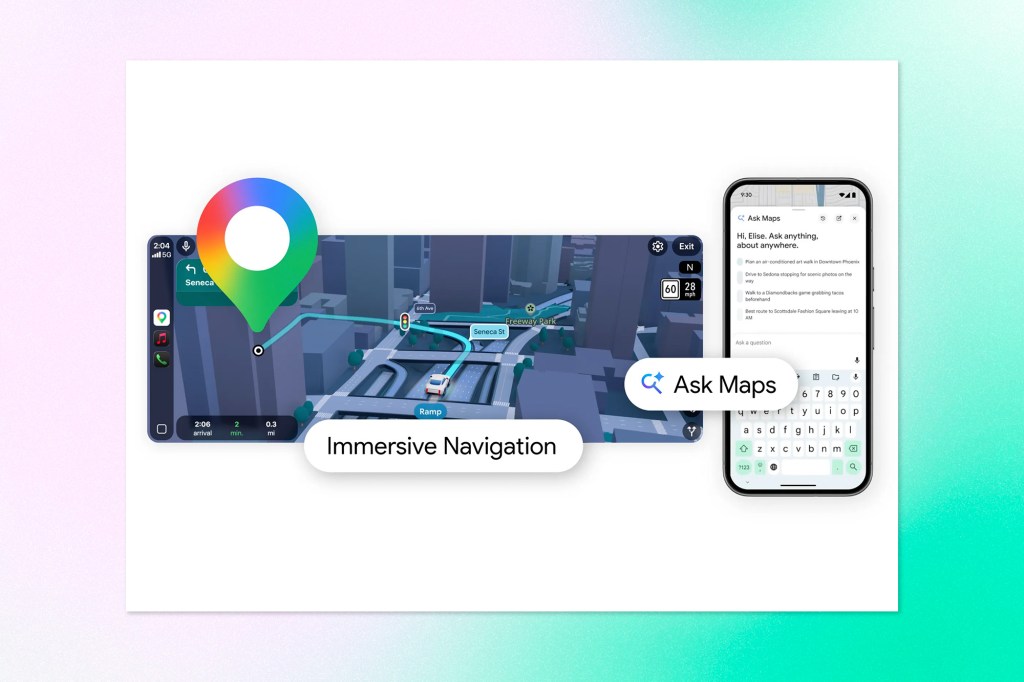

- Google Maps launched Ask Maps and Immersive Navigation, its most significant update in over a decade, bringing Gemini-powered conversational discovery and a rebuilt 3D driving experience.

🤖 The Agent Stack

Nvidia Wants to Be the Enterprise Layer for AI Agents

Jensen Huang used his GTC keynote this week to make a sweeping claim: every company needs an OpenClaw strategy. And conveniently, Nvidia is ready to sell them one.

The announcement was NemoClaw, an enterprise-focused platform built on top of OpenClaw, the open-source framework for building and running AI agents on local hardware. The pitch is straightforward: take the flexibility and momentum that OpenClaw has built in the developer community and wrap it in the security, privacy controls, and governance guardrails that enterprise IT teams actually require before they’ll let agents anywhere near production systems.

NemoClaw was developed in collaboration with OpenClaw creator Peter Steinberger and supports any coding agent or open-source model, including Nvidia’s own Nemotron family. It can pull from cloud-based models while running locally, and notably doesn’t require Nvidia hardware to function.

The platform is currently in early alpha, with Nvidia openly acknowledging rough edges and positioning this as a starting point rather than a finished product.

Huang leaned heavily on historical analogy during the keynote, placing OpenClaw alongside Linux, Kubernetes, and HTTP as the kind of foundational open-source infrastructure that entire industry waves get built on. It’s a bold frame, but not an irrational one, given how quickly agentic AI is moving from demo to deployment conversation.

Why it matters: Enterprise adoption of AI agents has been consistently blocked by one thing: trust. Not trust in the models themselves, but trust in the infrastructure around them. Who controls the data? What are the guardrails on agent behaviour? What happens when something goes wrong? NemoClaw is Nvidia’s answer to those questions, and it signals something bigger than a single product launch. The GPU giant is making a deliberate move to own the enterprise agent stack, not just the silicon underneath it. With OpenAI’s Frontier platform already in market and every major vendor racing to become the governance layer for agentic AI, Nvidia is staking its claim that open-source infrastructure, combined with enterprise hardening, is the winning formula. If that bet is right, NemoClaw could become table stakes for enterprise AI deployment the same way Kubernetes became table stakes for cloud infrastructure.

Picsart Lets Creators Hire AI Agents Instead of Running Every Workflow Themselves

Picsart, the AI-powered design platform with over 130 million users globally, is launching an agent marketplace that reframes how creators interact with the tool. Rather than manually executing every task, users can delegate to specialized AI assistants built for specific jobs.

The initial lineup includes four agents: Flair, which integrates directly with Shopify to analyze store performance and recommend improvements to product photography and presentation; Resize Pro, which handles reformatting images and video for different platform dimensions, using generative AI to extend frames rather than just cropping; Remix, which applies a chosen aesthetic style across an entire photo library in bulk; and Swap, which handles background replacement at scale.

The Shopify integration for Flair is the most ambitious of the four. Eventually, it will run A/B tests and surface underperforming products proactively, though that functionality isn’t live yet. All agents can be accessed through WhatsApp and Telegram, a practical choice for creators who manage content on the go and don’t want to context-switch to a separate app.

Picsart is building in some guardrails. Users can set autonomy levels that require approval before an agent takes any action, which matters given the hallucination risk inherent to any LLM-based workflow. The platform’s relatively controlled environment, walled off from direct customer or internet interaction, also limits prompt injection exposure compared to more publicly facing agent deployments.
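
To make the approval idea concrete, here is a minimal Python sketch of an approval gate. Picsart has not published how its autonomy levels work under the hood, so every name here (`Autonomy`, `Action`, `run_action`) is hypothetical, and the two levels shown are an assumption made purely for illustration.

```python
from dataclasses import dataclass
from enum import Enum
from typing import Callable


class Autonomy(Enum):
    """Hypothetical autonomy levels; not Picsart's actual settings."""
    ASK_ALWAYS = "ask_always"  # every action needs explicit approval
    AUTO = "auto"              # the agent acts without asking


@dataclass
class Action:
    description: str


def run_action(action: Action, level: Autonomy,
               approve: Callable[[Action], bool]) -> str:
    """Run the action only if the autonomy level or the user allows it."""
    if level is Autonomy.ASK_ALWAYS and not approve(action):
        return "skipped"
    # A real agent would perform the edit here; the sketch only reports it.
    return "executed"
```

The point of the gate is that a hallucinated or unwanted action stops at the `approve` callback instead of reaching the user’s content.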

Agent access is largely gated behind paid plans, which start around $10 per month.

Why it matters: Picsart is doing something quietly significant here: translating the agentic AI concept into something a social media manager or small business owner can actually use without needing to understand what an agent is. The “hire” framing is deliberate. It positions AI delegation as a workflow decision rather than a technical one, which is exactly the kind of language that drives adoption outside the developer and enterprise audiences everyone else is chasing. For a platform whose core users are Gen Z creators and indie operators, this is a sharp positioning move. Whether the agents perform reliably enough to earn that trust is another question, but the interface model is one more signal that agentic AI is moving fast from infrastructure conversation to consumer product.

Replit’s Agent 4 Focuses on Flow Over Autonomy

Replit has launched Agent 4, the latest version of its AI coding assistant, and the headline improvement isn’t raw capability so much as a rethought relationship between the human and the machine.

Where Agent 3 pushed toward autonomy, running independently for extended stretches and self-correcting as it went, Agent 4 shifts the emphasis toward creative control. The idea is that once the mechanics of building can run on their own, the more interesting problem becomes keeping the person doing the actual thinking in a state of flow rather than bogged down in coordination.

A few concrete changes make that possible. Design and development now live in the same environment, so you can explore visual variants on an infinite canvas while the agent builds in the background, then apply what you like directly to production code without switching tools. Parallel task execution lets multiple agents work on independent parts of a project simultaneously, with progress visible and results reviewable before anything merges into the main codebase. For larger tasks, Agent 4 can decompose them automatically, run sub-agents on the pieces, and reconcile the outputs. And the scope of what you can build in a single project has expanded beyond apps to include decks, animations, data apps, and mobile experiences, all sharing the same context and design system.
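
The pattern described above (run independent pieces in parallel, then review before anything merges) can be sketched in a few lines of Python. This is not Replit’s implementation: `run_subagent`, `build`, `build_sync`, and the `review` callback are hypothetical stand-ins, and the sub-agent here only returns a placeholder string where a real one would call a model and edit files.

```python
import asyncio
from typing import Callable


async def run_subagent(task: str) -> dict:
    """Hypothetical sub-agent: returns a placeholder draft for one task."""
    await asyncio.sleep(0)  # yield control so the tasks can interleave
    return {"task": task, "result": f"draft for {task}"}


async def build(tasks: list[str], review: Callable[[dict], bool]) -> list[dict]:
    # Run the independent tasks concurrently; gather keeps input order.
    drafts = await asyncio.gather(*(run_subagent(t) for t in tasks))
    # Only drafts that pass review are merged into the final result.
    return [d for d in drafts if review(d)]


def build_sync(tasks: list[str], review: Callable[[dict], bool]) -> list[dict]:
    """Convenience wrapper for callers that are not already async."""
    return asyncio.run(build(tasks, review))
```

Calling `build_sync(["header", "footer"], review)` would run both tasks side by side and keep only the drafts the reviewer accepts, which is the human-in-the-loop step the release emphasizes.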

Agent 4 also integrates with external tools like Linear, Notion, and Databricks, letting users query and take action across connected services through natural language. Parallel execution is gated to Pro and Enterprise tiers, though Replit is making it temporarily available to Core users around the launch.

Why it matters: Replit is making a deliberate argument here that the bottleneck in AI-assisted development isn’t the model but the environment. That’s a meaningful distinction. Most of the agentic AI conversation has focused on what models can do autonomously; Replit is focusing on what the surrounding infrastructure needs to look like for a human and an agent to actually work well together. With parallel execution in particular, Replit starts to look less like a coding tool and more like a small development team that happens to run in a browser. For founders, PMs, and small teams building production software without a large engineering headcount, that framing has real appeal.

🟦 Platform & Infrastructure

WordPress Is Building AI Into Core, and Version 7.0 Is the Turning Point

The WordPress AI Experiments plugin has released version 0.5.0, and the update is less about new features than it is about positioning. The release is largely preparatory, aligning the plugin with WordPress 7.0, which arrives April 9th and brings native AI infrastructure directly into core for the first time.

The practical change in 0.5.0 is that the plugin has dropped its own standalone AI client dependencies and now relies on WordPress core’s built-in AI layer instead. Credentials previously managed through the plugin’s own settings screen are migrated to a new Connectors screen in core, centralizing how AI providers are configured across a WordPress install. The plugin now also requires WordPress 7.0 as its minimum version, which means users on 6.9 will need to wait for the official release or test a beta build before upgrading.

The roadmap for version 0.6.0 is where things get more interesting. Planned work includes image editing with generative fill, erase-and-replace, and background removal; a “Refine from Notes” feature that updates post content based on editorial feedback; contextual tagging that suggests categories and tags based on content; and content provenance tracking through C2PA. The plugin itself may also be renamed from AI Experiments to WordPress AI, with some experiments elevated to full features.

Earlier-stage explorations include type-ahead suggestions, content moderation, an AI Playground, and deeper MCP integration.

Why it matters: For anyone building on WordPress at an enterprise level, this is the release cycle worth watching. When AI capabilities land in WordPress core rather than living in a plugin layer, they become part of the platform contract. That changes the calculus for enterprise clients evaluating WordPress against competitors: AI isn’t a bolt-on anymore, it’s infrastructure. The Connectors architecture also signals a deliberate move toward provider flexibility rather than locking into a single model vendor, which is exactly the kind of governance-friendly design that enterprise IT teams need to see before they’ll approve a platform direction. WordPress 7.0 and the maturation of this plugin are quietly significant milestones.

🎨 Design & Build

Google Stitch Officially Becomes an AI-Native Design Canvas

Google has formally announced the next evolution of Stitch, reorienting the product around what it’s calling “vibe design”: a natural language-first approach to building high-fidelity UI that doesn’t require starting from a wireframe or a blank artboard.

The centrepiece of the update is a rebuilt infinite canvas designed to hold everything from early sketches to working prototypes in one place, accepting images, text, and code as input. A new design agent reasons across the full history of a project rather than treating each prompt in isolation, and a companion Agent Manager lets users run multiple design directions in parallel without losing track of where things stand.

Design system support gets a meaningful upgrade through DESIGN.md, an agent-readable markdown file that captures and exports design rules so they can be carried across projects or imported into other tools. You can also extract a design system directly from any URL, which closes a gap that previously required significant manual work to bridge.

Prototyping is now immediate: static screens can be stitched together and previewed as an interactive flow in seconds, with Stitch able to generate logical next screens automatically based on navigation paths. Voice is also live, letting users speak directly to the canvas for real-time critiques, palette variations, or layout alternatives without breaking their working rhythm.

For handoff, Stitch connects to developer tools like AI Studio via an MCP server and SDK, with design exports keeping the design-to-development pipeline synchronized.

Why it matters: The design-to-code gap has been one of the most persistent friction points in product development, and every major platform is racing to close it. What makes the Stitch direction notable is the combination of voice interaction, parallel agent management, and a portable design system standard in a single tool. DESIGN.md in particular is worth watching: if it gets traction as a shared format across design and coding tools, it could become the kind of quiet standard that reshapes how design systems travel between environments. Google I/O 2026 is still six weeks away, and this is already one of the more coherent end-to-end design stories in the market.

📍 AI in the Wild

Google Maps Gets Its Biggest Overhaul in a Decade

Google is pushing Gemini into Maps in a meaningful way, with two distinct upgrades launching this week that together reframe what a navigation app is actually for.

The first is Ask Maps, a conversational layer that lets users ask the kind of context-heavy questions that traditional search has never handled well. Rather than typing a category and scrolling through pins, you can describe a specific situation and get a tailored answer, drawing on data from over 300 million places and reviews from more than 500 million contributors. The results are personalized based on your search and save history, so recommendations factor in preferences you’ve already expressed without you having to restate them. From the answer, you can book, save, share, or navigate in a few taps. Ask Maps is rolling out now in the US and India on Android and iOS.

The second is Immersive Navigation, described as the largest redesign of the driving experience in over a decade. The map shifts to a vivid 3D view built from Street View imagery and aerial photos processed through Gemini models, surfacing real-world details like lane markings, traffic lights, overpasses, and landmarks as they actually appear. Voice guidance is rewritten to sound more like a person talking you through a route than a robotic instruction sequence. The update also introduces clearer tradeoff summaries when alternate routes are available, real-time disruption alerts sourced from community drivers, and destination previews that guide you from your last turn to the front door.

Why it matters: Navigation has been a largely solved problem for years, which is why this update is worth paying attention to. Google isn’t just polishing the interface; it’s making a case that Gemini’s ability to reason over unstructured, real-world information unlocks genuinely new functionality rather than incremental improvement. Ask Maps in particular represents a shift in the mental model: from a map you consult to a local expert you talk to. For Google, embedding Gemini this deeply into a product used by over a billion people is also a distribution play. It normalizes conversational AI interaction in a context where the value is immediately legible, which is harder to do with a chatbot that starts from a blank prompt.
