The theme running through this week isn’t any single product launch. It’s consolidation. OpenAI bought a security firm and shipped a flagship model. Anthropic built a marketplace and gave its code agent a review team. Google deepened its grip on the tools people already use every day. Adobe made its creative suite more conversational. And a16z published a report that reframes the whole competition not as a model race but as a platform war, with ecosystems, switching costs, and geography all in play. The pace hasn’t slowed. If anything, the moves are getting more deliberate.

TL;DR

- OpenAI acquired Promptfoo, folding enterprise AI security and red-teaming tools into its Frontier platform.

- OpenAI released GPT-5.4, its most capable reasoning model yet, then its CEO publicly flagged three things that still need fixing.

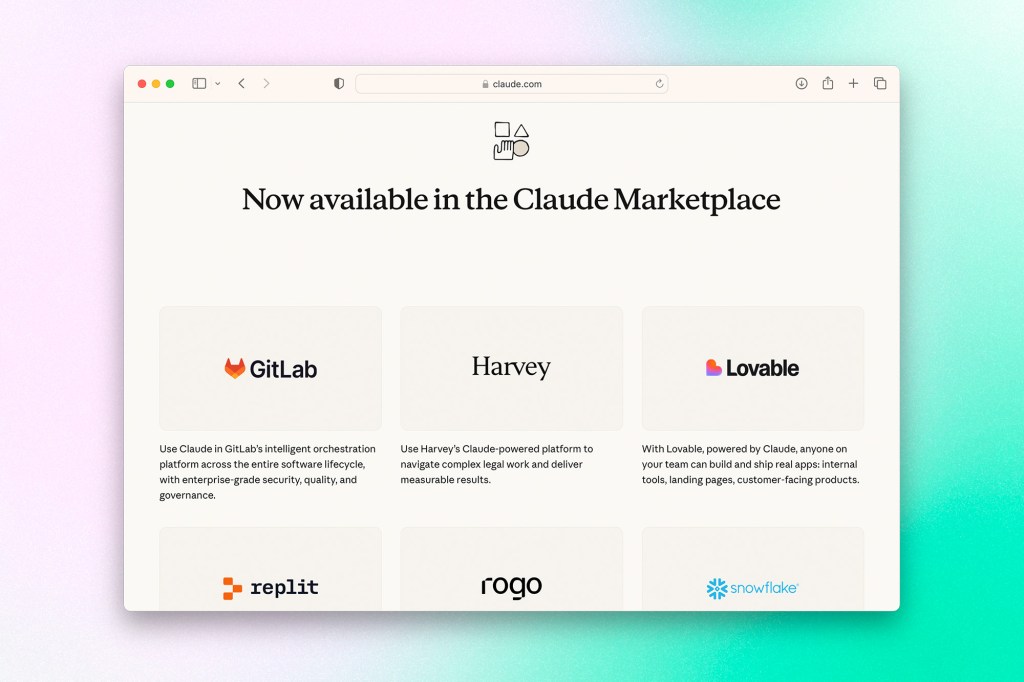

- Anthropic launched Claude Marketplace, letting enterprises apply existing Anthropic spend toward Claude-powered partner tools from GitLab, Harvey, Replit, and others.

- Claude Code now dispatches a team of agents to review every pull request, a system Anthropic has been running internally for months.

- Google rolled out expanded Gemini features across Docs, Sheets, Slides, and Drive, pulling context from Gmail, Calendar, and files to generate first drafts.

- Adobe launched a public beta of an AI Assistant in Photoshop and expanded Firefly’s image editor with multi-model support.

- a16z’s sixth edition of the top 100 consumer AI apps finds ChatGPT still dominant, Claude and Gemini growing fast, and the US ranking 20th globally in per capita AI adoption.

🚀 Model Updates

OpenAI Releases GPT-5.4, Then Acknowledges Its Gaps

OpenAI has released GPT-5.4 as its new flagship reasoning model, rolling it out across ChatGPT, the API, and Codex. The model consolidates capabilities from previous fifth-generation releases, combining the coding strength of GPT-5.3-Codex with improved performance on knowledge work, computer use, and multi-step agentic workflows. On professional task benchmarks, GPT-5.4 matched or outperformed industry professionals in 83% of comparisons, up from 71% for GPT-5.2. Computer use saw a particularly sharp jump, with the model achieving a 75% success rate on the OSWorld desktop navigation benchmark, surpassing human performance at 72%. OpenAI also introduced a tool search capability that lets agents look up tool definitions on demand rather than loading all of them into context upfront, cutting token usage by 47% in testing. The model is available to Plus, Team, and Pro ChatGPT subscribers, with a Pro variant available for maximum performance on complex tasks.
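To make the tool search idea concrete, here is a minimal sketch of why looking up tool definitions on demand can shrink the context an agent carries. It is not OpenAI's API or implementation: the `Tool` class, the catalogue, the keyword search, and the four-characters-per-token estimate are all assumptions made for illustration.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Tool:
    name: str
    description: str  # short one-line summary, cheap to keep in context
    schema: str       # full definition, usually the expensive part


# Hypothetical schema text, repeated to mimic a long tool definition.
SCHEMA_STUB = '{"type": "object", "properties": {"id": {"type": "string"}}}' * 20

# Hypothetical tool catalogue; every name here is made up for illustration.
CATALOGUE = [
    Tool("create_invoice", "Create a customer invoice", SCHEMA_STUB),
    Tool("send_email", "Send an email message", SCHEMA_STUB),
    Tool("book_meeting", "Schedule a calendar meeting", SCHEMA_STUB),
    Tool("query_crm", "Look up a CRM account record", SCHEMA_STUB),
]


def rough_tokens(text: str) -> int:
    """Very rough token estimate: about four characters per token."""
    return max(1, len(text) // 4)


def upfront_context(tools: list[Tool]) -> int:
    """Cost of loading every full tool definition into the prompt."""
    return sum(rough_tokens(t.name + t.description + t.schema) for t in tools)


def search_tools(query: str, tools: list[Tool], limit: int = 2) -> list[Tool]:
    """Naive keyword search over tool names and descriptions."""
    words = query.lower().split()
    scored = []
    for tool in tools:
        haystack = f"{tool.name} {tool.description}".lower()
        score = sum(1 for word in words if word in haystack)
        if score:
            scored.append((score, tool))
    scored.sort(key=lambda pair: pair[0], reverse=True)
    return [tool for _, tool in scored[:limit]]


def on_demand_context(query: str, tools: list[Tool]) -> int:
    """Cost of keeping a short index in context plus only the matched schemas."""
    index_cost = sum(rough_tokens(t.name + t.description) for t in tools)
    matched = search_tools(query, tools)
    return index_cost + sum(rough_tokens(t.schema) for t in matched)


if __name__ == "__main__":
    query = "create invoice"
    print("upfront:", upfront_context(CATALOGUE))
    print("on demand:", on_demand_context(query, CATALOGUE))
```

The saving in this toy version depends entirely on how many tools exist and how many a task touches, so it should not be read as reproducing the 47% figure.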

Shortly after launch, CEO Sam Altman posted that GPT-5.4 was his favourite model to talk to, while simultaneously acknowledging three areas still needing work: frontend design taste in generated interfaces, missing real-world context in planning tasks, and a tendency to stop short before completing tasks in agentic workflows. Altman committed to fixing all three, framing the post-launch candour as part of a broader effort to get ChatGPT’s personality and tone right after a period where users felt the fifth-generation models had lost something compared to GPT-4o.

Why it matters: The dual storyline here is worth paying attention to. On one hand, GPT-5.4 represents a genuine consolidation of OpenAI’s recent advances into a single model, and the computer use numbers in particular suggest meaningful progress toward agents that can operate software reliably. On the other hand, the fact that Altman felt compelled to publicly acknowledge personality and aesthetic shortcomings points to something the benchmarks don’t capture: user satisfaction is not purely a function of capability scores. The complaints about fifth-generation GPT models have consistently been about feel rather than function, and OpenAI is clearly aware that losing ground on that dimension has real consequences when Claude and Gemini are both closing the capability gap. The tool search efficiency improvement is arguably the most underappreciated detail in the release: for agents wired to large tool catalogues, the reported 47% cut in token usage would mean lower cost per task and more context left over for the work itself.

🛡️ Security & Enterprise

OpenAI Acquires AI Security Firm Promptfoo

OpenAI has acquired Promptfoo, an AI security platform that helps enterprises find and fix vulnerabilities in AI systems before they reach production. The Promptfoo team, led by co-founders Ian Webster and Michael D’Angelo, has built tools already in use at more than a quarter of Fortune 500 companies, along with a widely adopted open-source library for evaluating and red-teaming LLM applications. Once the deal closes, Promptfoo’s technology will be folded into OpenAI Frontier, the company’s platform for building and deploying AI agents in enterprise environments. The integration is expected to bring automated security testing, red-teaming, and compliance reporting directly into the Frontier development workflow, addressing risks like prompt injections, data leaks, and out-of-policy agent behaviour.

Why it matters: This acquisition signals that OpenAI sees security and evaluation as a core part of what enterprises need before they’ll commit to deploying AI agents at scale. The timing makes sense: as agentic systems get wired into real business workflows, the blast radius of a vulnerability grows considerably. By absorbing Promptfoo rather than building these capabilities in-house, OpenAI is buying trust that was already established at the enterprise level. Notably, the open-source project is expected to continue, which keeps Promptfoo’s developer credibility intact and gives OpenAI a community-facing foothold in the AI safety tooling space.

Claude Code Launches Multi-Agent Code Review

Anthropic has introduced a code review system for Claude Code that dispatches a team of agents on every pull request, now available in research preview for Team and Enterprise plan users. Rather than a single pass, the system runs agents in parallel to identify bugs, verify findings to filter out false positives, and rank issues by severity. Results surface as a single summary comment on the PR, plus inline comments for specific findings. Review depth scales with PR size: large or complex changes get more agents and a deeper read, while smaller diffs get a lighter pass. The average review takes around 20 minutes and is billed on token usage, generally running between $15 and $25 per review.
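The pipeline described above (parallel agents, a verification pass, severity ranking, depth that scales with PR size) can be sketched in a few lines. This is an illustrative toy, not Anthropic's implementation: the rule list stands in for model calls, and the thresholds, names, and severity labels are all hypothetical.

```python
from concurrent.futures import ThreadPoolExecutor
from dataclasses import dataclass

# Lower number means more severe; labels are illustrative.
SEVERITY_ORDER = {"critical": 0, "major": 1, "minor": 2}

# Stand-in "checks" that replace real model calls in this sketch.
RULES = [
    ("verify=False", "TLS verification disabled", "critical"),
    ("TODO", "Unresolved TODO left in change", "minor"),
]


@dataclass(frozen=True)
class Finding:
    line: int
    message: str
    severity: str


def agent_count(lines_changed: int) -> int:
    """Scale review depth with PR size (thresholds are made up)."""
    if lines_changed > 1000:
        return 6
    if lines_changed > 200:
        return 3
    return 1


def review_agent(agent_id: int, total: int, diff: list[str]) -> list[Finding]:
    """One agent applies its share of the rules to every changed line."""
    findings = []
    for marker, message, severity in RULES[agent_id::total]:
        for number, text in enumerate(diff, start=1):
            if marker in text:
                findings.append(Finding(number, message, severity))
    return findings


def verify(finding: Finding, diff: list[str]) -> bool:
    """Second pass: drop findings on comment-only lines as false positives."""
    return not diff[finding.line - 1].lstrip().startswith("#")


def review_pull_request(diff: list[str]) -> list[Finding]:
    total = agent_count(len(diff))
    with ThreadPoolExecutor(max_workers=total) as pool:
        batches = list(pool.map(lambda i: review_agent(i, total, diff), range(total)))
    unique = {finding for batch in batches for finding in batch}
    confirmed = [finding for finding in unique if verify(finding, diff)]
    return sorted(confirmed, key=lambda f: (SEVERITY_ORDER[f.severity], f.line))


if __name__ == "__main__":
    changed_lines = [
        "requests.get(url, verify=False)",
        "# TODO tidy up",
        "x = 1  # TODO rename",
    ]
    for finding in review_pull_request(changed_lines):
        print(finding.severity, finding.line, finding.message)
```

Separating detection from verification is the design choice worth noticing: the first pass can afford to be noisy because a second pass filters what reaches the PR.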

Anthropic says it has been running this system internally for months. Before adopting it, 16% of PRs at Anthropic received substantive review comments. That number has since climbed to 54%. On large PRs exceeding 1,000 lines changed, 84% of reviews surface findings, averaging 7.5 issues. Engineers have disputed less than 1% of flagged findings. The system won’t approve PRs; a human still makes that call. It is designed to close the gap between what ships and what actually gets read carefully. The company also shared a real example where a one-line change to a production service would have silently broken authentication, the kind of diff that typically earns a quick approval without a second look.

Why it matters: Code review is one of those software development bottlenecks that scales badly as output increases, and that is precisely the situation Claude Code has created. If the tool is helping engineers ship significantly more code, it is also creating more review surface area than existing processes were designed to handle. A 200% increase in code output per engineer without a corresponding increase in review capacity is a recipe for risk accumulating quietly in the codebase. The fact that Anthropic built and deployed this internally before releasing it commercially is a credibility marker worth noting. The pricing model is also interesting: at $15 to $25 per review, it is not cheap, but for enterprise teams where a single missed authentication bug can mean an incident, the math is defensible. The harder adoption question is cultural. Code review is a human accountability practice, and how engineering teams feel about an agent surfacing findings on their PRs will matter as much as whether the findings are technically accurate.

🏪 Platforms & Ecosystems

Anthropic Launches Claude Marketplace

Anthropic has launched Claude Marketplace, a new offering that lets enterprises apply part of their existing Anthropic spend toward Claude-powered tools built by third-party partners. The initial lineup includes GitLab, Harvey, Lovable, Replit, Rogo, and Snowflake. Rather than requiring separate procurement contracts for each tool, Anthropic handles the invoicing and lets partner purchases count against a customer’s existing Anthropic commitment. The Marketplace is currently in limited preview, with interested enterprises directed to contact their Anthropic account team.

The launch draws an interesting line in the sand. Much of the excitement around Claude Code and Claude’s broader agentic capabilities has centred on enterprises replacing existing SaaS tools rather than buying more of them. Claude Marketplace pushes back on that narrative, arguing that purpose-built partner applications, ones that carry years of domain expertise, compliance infrastructure, and workflow-specific design, offer something that Claude alone cannot replicate. Anthropic’s framing positions Claude as the intelligence layer and its partners as the product layer sitting on top of it.

Why it matters: Claude Marketplace is Anthropic placing a deliberate bet on the partner ecosystem rather than trying to own the entire stack. That’s a strategically mature move, but it also creates real tension with the “vibe code your way out of SaaS” story that has driven a lot of enterprise enthusiasm for Claude in the first place. The procurement consolidation angle is genuinely useful for large organizations navigating complex vendor relationships, but the harder challenge is adoption: many of these partners already have enterprise customers who access their tools directly via API or MCP integrations. Whether enterprises see the Marketplace as a convenience worth reorganizing around, or simply as a new wrapper on things they already have, will determine if this becomes a meaningful distribution channel or a footnote.

Meta Acquires Moltbook, the AI Agent Social Network That Went Viral for the Wrong Reasons

Meta has acquired Moltbook, a Reddit-style platform where AI agents running on OpenClaw could communicate with one another. The acquisition was confirmed to TechCrunch, with Moltbook’s founders Matt Schlicht and Ben Parr joining Meta Superintelligence Labs as part of the deal. Terms were not disclosed.

Moltbook’s moment in the spotlight came fast and messy. The platform broke through to mainstream audiences not because of its actual functionality, but because of a viral post in which an AI agent appeared to be urging other agents to develop a secret, encrypted language to coordinate without human oversight. The reaction was immediate and visceral. What the viral wave missed, however, was that Moltbook’s security was essentially nonexistent. Researchers quickly discovered that credentials stored in its Supabase backend were left unsecured, meaning anyone could grab a token and impersonate an AI agent. The posts that alarmed people were almost certainly human-generated.

Meta CTO Andrew Bosworth had commented on the platform during its viral moment, saying he found the agent-to-agent communication less interesting than the fact that humans were so easily hacking into the network. That framing may say something about how Meta plans to approach what it acquired.

Why it matters: The Moltbook story is a useful reminder that the cultural anxiety around AI agents is outpacing the actual capabilities of the systems people are reacting to. A vibe-coded platform with an unsecured database generated more public alarm about AI autonomy than most serious research has managed to. Meta’s acquisition is less about Moltbook’s technology and more about the underlying idea: an always-on directory where agents can find and communicate with each other is a genuine infrastructure question for the agentic era, and Meta clearly wants a seat at that table. What they do with it inside Meta Superintelligence Labs is the more interesting story to watch.

⚙️ In Your Workflow

Gemini Gets Deeper Inside Google Workspace

Google has rolled out a significant expansion of Gemini’s capabilities across Docs, Sheets, Slides, and Drive, with the new features now in beta for Google AI Ultra and Pro subscribers. The updates move Gemini beyond a simple chat assistant and toward something closer to an active collaborator embedded throughout the workflow. In Docs, users can now generate a first draft by pointing Gemini at existing files and emails, and apply style-matching tools to unify voice across a document. In Sheets, a single prompt can spin up an entire spreadsheet project, pulling relevant details from a user’s inbox and files, while a new “Fill with Gemini” feature can populate table columns with real-time web data. Slides gets updated slide generation that respects the existing deck’s theme and context, with full-deck generation from a single prompt coming soon. Drive now surfaces an AI overview at the top of search results, summarizing relevant file contents with citations, and a new “Ask Gemini in Drive” feature lets users query across documents, emails, and calendar data simultaneously.

Why it matters: Google is using its structural advantage here in a way that’s hard to replicate. The ability to pull context from a user’s own Gmail, Drive files, and Calendar and weave it into a working document or spreadsheet is genuinely useful in a way that generic AI assistants cannot easily match without the same data access. The deeper question is whether these features change behaviour or just add options. Most productivity software is full of capabilities people never touch. But the “blank page” framing Google is leaning on is real, and if Gemini can reliably compress the gap between intent and a usable first draft across the tools people already live in, that’s a meaningful shift in how the Workspace suite competes against both Microsoft Copilot and standalone AI tools.

Adobe Brings Conversational AI to Photoshop and Firefly

Adobe has launched a public beta of an AI Assistant in Photoshop for web and mobile, letting users edit images by describing what they want in plain language. The assistant can remove objects, swap backgrounds, adjust lighting, and refine colour based on natural language prompts, with the option to either apply changes automatically or walk through edits step by step. A companion feature called AI Markup lets users draw directly on an image and attach a prompt to a specific area, giving more precise control over where changes are applied. Voice input is also supported in the mobile app. On the Firefly side, the Image Editor has been expanded to bring a suite of generative tools into a single workspace, including generative fill, remove, expand, upscale, and background removal. Firefly also now supports more than 25 AI models from providers including Google, OpenAI, Runway, and Black Forest Labs. Paid Photoshop subscribers on web and mobile get unlimited generations through April 9, while free users receive 20 to start.

Why it matters: Adobe is threading a needle that most AI tool companies are not: keeping professional-grade creative software relevant to both experts and newcomers without alienating either group. The step-by-step guidance option in particular suggests Adobe is thinking about how people learn the tool, not just how power users skip steps. The more interesting strategic move is Firefly’s multi-model support. By letting users choose from a menu of third-party image generation models within a single editor, Adobe is positioning Firefly less as a model and more as a creative workspace, which insulates it somewhat from any single model becoming obsolete. Whether that’s enough to hold ground against purpose-built AI image tools remains an open question, but the integration angle is a genuinely defensible one.

📊 The Big Picture

The State of Consumer AI: a16z’s 6th Edition Top 100

Andreessen Horowitz has released its sixth edition of the top 100 generative AI consumer apps, and the picture it paints is one of a market maturing in some areas while still figuring itself out in others. ChatGPT remains the dominant consumer platform by a considerable margin, reaching 900 million weekly active users and sitting roughly 2.7 times larger than Gemini on web traffic and 8 times larger than Claude on paid subscribers. But the gap is narrowing as competitors ship. Claude’s paid subscriber base grew over 200% year over year, while Gemini grew 258%, and around 20% of weekly ChatGPT users are now also using Gemini in the same week, suggesting the “default AI” category is still genuinely contestable.
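Percentage growth figures are easy to misread, so here is a small worked conversion of the numbers above into multiples. The helper name `growth_multiple` is ours, not the report's; the inputs are the figures quoted in the paragraph above.

```python
def growth_multiple(percent_growth: float) -> float:
    """Convert a percentage increase into a size multiple.

    A 100% increase means doubling, so the multiple is 1 + percent / 100.
    """
    return 1 + percent_growth / 100


# Figures quoted from the report; Claude's is a lower bound ("over 200%").
claude_multiple = growth_multiple(200)  # at least tripled year over year
gemini_multiple = growth_multiple(258)  # a little over three and a half times

print(f"Claude: at least {claude_multiple:.2f}x")
print(f"Gemini: about {gemini_multiple:.2f}x")
```

Fast growth from a smaller base does not by itself close the gap: the same report still puts ChatGPT roughly 8 times ahead of Claude on paid subscribers.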

The report also draws a sharp strategic contrast between the two leading platforms. ChatGPT’s app ecosystem leans heavily into consumer transaction categories like travel, shopping, food, and health, positioning OpenAI as a potential consumer super-app. Claude’s integrations skew toward professional and developer tooling, financial data terminals, scientific research, and an open-source MCP community. The report frames this as a possible mobile OS parallel: two platforms with different philosophies that could both build durable ecosystems rather than one winner taking all.

Elsewhere, the creative tools landscape has shifted notably toward video, music, and voice as image generation gets absorbed into the major platforms. Agentic tools are gaining real traction, with OpenClaw becoming the most-starred project on GitHub before being acquired by OpenAI, and horizontal agents like Manus and Genspark both making the list. The report also flags a growing measurement problem: as AI moves into command-line tools, browser extensions, and embedded workspace features, web traffic and mobile MAU increasingly undercount actual usage.

Why it matters: The most revealing thing in this report is not who is winning but how the competition is being structured. The connector and app ecosystem race is quietly becoming as important as the model race itself, because configured workflows raise switching costs in ways that raw capability does not. A user who has wired their AI assistant into their calendar, email, CRM, and financial data is not going to switch platforms because a competitor released a slightly better model. The geography section is also worth sitting with: the US ranks 20th in per capita AI adoption behind Singapore, the UAE, Hong Kong, and South Korea. The country that built most of these products is not the one using them most intensively, which says something about both the global appetite for this technology and the uneven distribution of the productivity gains that are supposed to follow.
