The democratization of AI capabilities accelerated dramatically this week as multiple companies chose to open-source sophisticated models that previously represented their competitive advantage. Roblox’s decision to release “Cube,” its 3D generation model, and Sesame’s sharing of the technology behind its realistic voice assistant, Maya, mark important milestones in making advanced AI more widely available. These moves come as Google enhances its Vertex AI platform with voice capabilities, Baidu positions itself against emerging Chinese AI leaders, and Zoom transforms its assistant into a true task-oriented agent.

Roblox Releases Open-Source 3D Generation Model “Cube”

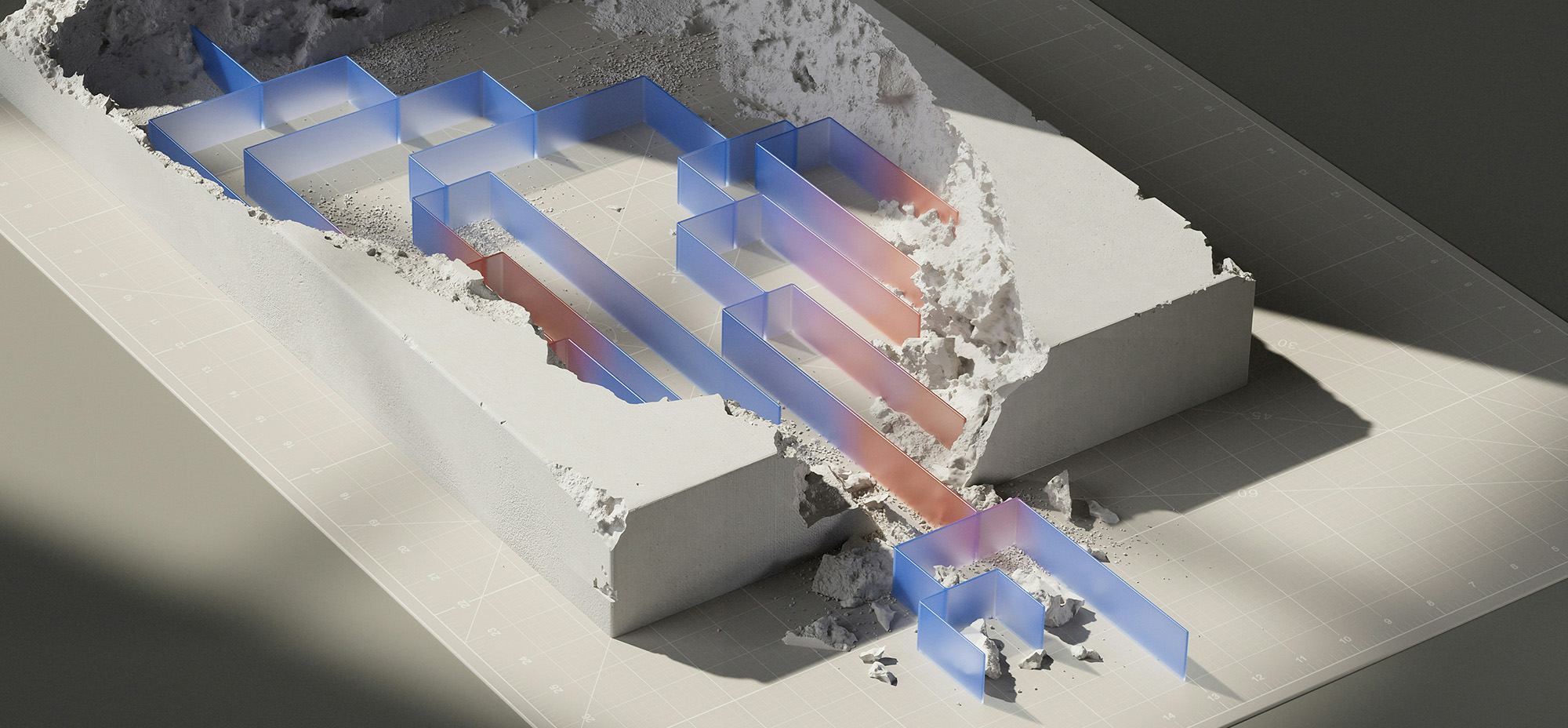

Roblox has announced the release of “Cube,” its new AI-powered 3D model generation tool. The first iteration of Cube focuses on mesh generation, allowing creators to produce 3D representations of objects from simple text prompts. For example, users can type “generate an orange racing car with black stripes” to create a fully formed 3D object that can then be further refined within Roblox Studio.

What makes this announcement particularly significant is Roblox’s decision to open-source Cube, which lets developers outside the platform customize the model, create plugins, or train it on specialized datasets to meet their specific needs.

Expanding AI Toolkit

Beyond Cube’s 3D generation capabilities, Roblox has announced three additional AI tools that will be launched in the coming months:

- Text generation – Enabling developers to add text-based AI features to their games, including interactive conversations with non-player characters (NPCs)

- Text-to-speech – Allowing for narration, NPC dialogue, and spoken captions

- Speech-to-text – Supporting voice commands for gameplay interactions

Future Developments

Roblox isn’t stopping with basic mesh generation. The company has outlined plans to expand Cube’s capabilities to handle more complex objects and to introduce scene generation. This would allow creators to generate entire environments, such as forest scenes, and then modify details, for example changing leaf colours to reflect the seasons.

The long-term vision is what Roblox calls “4D creation,” where the fourth dimension represents interaction between objects, environments, and people.

Google Brings Chirp 3 Voice Model to Vertex AI Platform

Google is making a significant move in the voice AI space by adding its Chirp 3 speech-to-text and HD text-to-speech models to the Vertex AI development platform starting next week. This expansion comes as voice interfaces gain momentum in a generative AI landscape that text-based interactions have so far dominated.

The integration of Chirp 3 into Vertex AI positions Google alongside other companies making rapid advances in voice technology. Just last week, Google quietly announced that Chirp 3 would be adding eight new voices across 31 languages, expanding its capabilities for developers.

Use Cases and Applications

Developers will be able to leverage Chirp 3 for various applications, including:

– Building voice assistants

– Creating audiobooks

– Developing support agents

– Producing voice-overs for videos

Vertex AI Ecosystem

Chirp 3 joins Google’s growing AI portfolio on Vertex AI, which includes:

– Recent versions of the Gemini LLM

– The Imagen image-generation model

– The premium Veo 2 video generation tool

Google’s Vertex AI platform, launched in 2021, has evolved significantly since the explosion of generative AI triggered by OpenAI’s GPT services. The addition of Chirp 3 represents another step in Google’s strategy to compete with Microsoft and Amazon in providing comprehensive AI development tools.

China’s Baidu Launches New AI Models Amid Intensifying Competition

Baidu, one of China’s leading tech giants, has announced the launch of two new artificial intelligence models as it works to strengthen its position in an increasingly competitive AI landscape.

The company unveiled ERNIE X1, a reasoning-focused model, and ERNIE 4.5, an advanced foundation model with improved multimodal capabilities. According to Baidu, ERNIE X1 delivers “performance on par with DeepSeek R1 at only half the price,” positioning it as a cost-effective alternative in the high-end AI model market.

ERNIE X1: A Cost-Efficient Reasoning Model

Baidu claims that ERNIE X1 is the “first deep thinking model that uses tools autonomously” and features enhanced capabilities in understanding, planning, reflection, and evolution. This positions the model directly against DeepSeek’s offerings, which have gained attention for providing performance comparable to industry-leading U.S. models at significantly lower costs.

ERNIE 4.5: Advanced Multimodal Capabilities

The ERNIE 4.5 foundation model emphasizes multimodal understanding, with Baidu highlighting its “excellent multimodal understanding ability” and “advanced language ability.” The company also notes that the model has “high EQ,” making it better at understanding internet memes and satirical cartoons—potentially addressing more nuanced cultural content that other models might struggle with.

Competitive Landscape

Baidu’s new releases come at a critical time in China’s AI development race. Despite being one of the earliest Chinese tech giants to launch a ChatGPT-style chatbot, Baidu has faced challenges in achieving widespread adoption for its ERNIE large language model. The emergence of DeepSeek, a Chinese AI startup whose models reportedly match or exceed U.S. industry leaders at significantly lower costs, has intensified competition.

Zoom’s AI Companion Gets “Agentic” Capabilities to Handle Administrative Tasks

Zoom is upgrading its AI Companion with new “agentic” capabilities that will allow it to identify and perform tasks on behalf of users. This enhancement, set to launch at the end of March, aims to reduce administrative workload by automating common busywork.

New Task Management Features

The upgraded AI Companion will be accessible through a dedicated Tasks tab in Zoom Workplace. Users will be able to delegate various functions to the AI, including:

– Scheduling follow-up meetings

– Generating documents from meeting content

– Creating video clips for sharing or review

Additional AI Enhancements

Beyond the core agentic capabilities, Zoom is enhancing its platform with additional AI features. Starting this month, Zoom Workplace mobile app users will gain access to a new voice recorder designed specifically for in-person meetings. This tool goes beyond simple recording by automatically transcribing and summarizing the content, making it easier to reference important discussions later.

Looking ahead to May, Zoom will introduce live notes functionality that generates real-time summaries during Zoom meetings and phone calls. This feature aims to help participants stay focused on the conversation while the AI captures key points and action items.

Custom AI Features Coming Soon

In April, Zoom plans to launch its Custom AI Companion add-on for $12 per month. The add-on will include a custom avatar feature that generates an AI version of the user for team communications, along with generic avatar templates (available at no added cost).

Sesame Opens Up the Technology Behind Its Realistic Voice Assistant Maya

Sesame, the AI company that captured public attention with its remarkably lifelike voice assistant Maya, has made a significant move by releasing its underlying AI model to the public. The company has open-sourced CSM-1B, the base model powering its viral assistant technology, under the Apache 2.0 license, which permits commercial use with minimal restrictions.

Technical Specifications

The released model has 1 billion parameters and is designed to generate “RVQ audio codes” from both text and audio inputs. RVQ (residual vector quantization) is a technique for encoding audio into discrete tokens that has become increasingly common in advanced AI audio technologies. Similar approaches have been used in Google’s SoundStream and Meta’s EnCodec.
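
To make the idea of residual vector quantization concrete, here is a minimal sketch in plain Python. It is an illustration of the general technique only, not Sesame’s implementation: the two tiny codebooks (`COARSE`, `FINE`) and the two-dimensional “frame” are hand-picked, hypothetical values, whereas real audio codecs learn much larger codebooks from data. Each stage picks the codeword nearest to whatever the previous stages left unexplained, so a frame is represented by one small integer per stage.

```python
from typing import List, Sequence

Vector = Sequence[float]
Codebook = Sequence[Vector]


def nearest(codebook: Codebook, v: Vector) -> int:
    """Return the index of the codeword closest to v (squared Euclidean distance)."""
    def dist(c: Vector) -> float:
        return sum((a - b) ** 2 for a, b in zip(c, v))
    return min(range(len(codebook)), key=lambda i: dist(codebook[i]))


def rvq_encode(v: Vector, codebooks: Sequence[Codebook]) -> List[int]:
    """Quantize v stage by stage; each stage encodes the residual of the previous one."""
    residual = list(v)
    codes = []
    for codebook in codebooks:
        idx = nearest(codebook, residual)
        codes.append(idx)
        # Subtract the chosen codeword so the next stage sees only the remaining error.
        residual = [r - c for r, c in zip(residual, codebook[idx])]
    return codes


def rvq_decode(codes: Sequence[int], codebooks: Sequence[Codebook]) -> List[float]:
    """Reconstruct an approximation by summing the selected codeword from each stage."""
    out = [0.0] * len(codebooks[0][0])
    for idx, codebook in zip(codes, codebooks):
        out = [o + c for o, c in zip(out, codebook[idx])]
    return out


# Hypothetical, hand-picked codebooks: a coarse stage followed by a finer one.
COARSE = [(0.0, 0.0), (1.0, 0.0), (0.0, 1.0), (1.0, 1.0)]
FINE = [(0.0, 0.0), (0.25, 0.0), (0.0, 0.25), (0.25, 0.25)]
CODEBOOKS = [COARSE, FINE]

frame = (1.2, 0.3)                      # stand-in for one frame of audio features
codes = rvq_encode(frame, CODEBOOKS)    # discrete tokens, one per stage
approx = rvq_decode(codes, CODEBOOKS)   # lossy reconstruction from the tokens
print(codes, approx)                    # prints [1, 3] [1.25, 0.25]
```

The discrete `codes` are the kind of tokens a language-model-style network can predict one after another; adding more stages reduces reconstruction error at the cost of more tokens per frame.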

Architecturally, CSM-1B combines a model from Meta’s Llama family as its foundation with a specialized audio “decoder” component. According to Sesame, a fine-tuned version of this base model is what actually powers the Maya assistant, which went viral for its naturalistic speech patterns.

Limitations and Concerns

The model comes with virtually no technical safeguards, relying instead on an honour system where Sesame simply asks users not to:

– Mimic someone’s voice without consent

– Create misleading content like fake news

– Engage in “harmful” or “malicious” activities

This release comes amid growing concerns about voice cloning technology. Consumer Reports recently highlighted that many popular AI voice cloning tools lack “meaningful” safeguards against potential fraud or abuse.

About Sesame

Sesame gained widespread attention in late February for its assistant technology that approaches human-like realism. Both Maya and Miles, Sesame’s voice assistants, incorporate human-like speech patterns, including breaths and disfluencies, and can be interrupted mid-sentence—similar to OpenAI’s Voice Mode but with even more naturalistic qualities.

Beyond voice assistants, Sesame has revealed that it’s developing AI glasses “designed to be worn all day” that will incorporate the company’s custom models, suggesting ambitious plans to expand its AI technology into wearable devices.
